In my previous post about implementing preview features in ASP.NET Core, I explained how to introduce feature flags in your application and manage them with the default appsettings.json file.

Managing your features from appsettings.json is not the best approach, because changing a flag requires restarting the application, which disrupts its users.

In this post, I will explain how to manage them with a tool built specifically for this purpose.

Azure App Configuration has a Feature Manager that lets you manage your feature flags: turn each one on or off and set the filters for it. It also supports auto refresh, so when you change a feature flag, the change reaches your running application shortly afterwards (once the cached value expires) without a restart.

Register Azure App Configuration as a Configuration Source

ASP.NET Core supports multiple configuration sources. When you call the CreateDefaultBuilder method in Program.cs, it automatically registers the following configuration sources for your application:

- appsettings.json
- appsettings.{environment}.json
- user secrets (only when the environment is Development)
- environment variables
- command-line arguments

The sources are registered in the order listed above; you can confirm this in the source code of CreateDefaultBuilder. If the same key exists in more than one source, the value from the source registered later wins. For example, if the key ConnectionStrings:DefaultConnection exists both in appsettings.json and in user secrets, the value from user secrets is used. This is useful for overriding values such as passwords and connection strings in your local development environment, so you avoid pushing them to source control.
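To see this precedence rule in isolation, here is a small sketch that uses two in-memory sources as stand-ins for appsettings.json and user secrets. The connection string values are made up for illustration, and the class name is hypothetical. It relies only on the Microsoft.Extensions.Configuration package, which ASP.NET Core projects already reference.

```csharp
using System.Collections.Generic;
using Microsoft.Extensions.Configuration;

public static class ConfigPrecedenceDemo
{
    public static string ResolveDefaultConnection()
    {
        var configuration = new ConfigurationBuilder()
            // Stand-in for appsettings.json: registered first.
            .AddInMemoryCollection(new Dictionary<string, string>
            {
                ["ConnectionStrings:DefaultConnection"] = "Server=prod-db;Database=App"
            })
            // Stand-in for user secrets: registered later, so its value wins.
            .AddInMemoryCollection(new Dictionary<string, string>
            {
                ["ConnectionStrings:DefaultConnection"] = "Server=localhost;Database=App"
            })
            .Build();

        return configuration["ConnectionStrings:DefaultConnection"];
    }
}
```

Calling ConfigPrecedenceDemo.ResolveDefaultConnection() returns "Server=localhost;Database=App", because the source added last wins. A source added in ConfigureAppConfiguration is registered after all of the default sources, so it overrides them in the same way.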

To register Azure App Configuration as an additional configuration source, install the Microsoft.Azure.AppConfiguration.AspNetCore package and add the following to Program.cs:

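```csharp
// Program.cs, inside the Program class
public static IHostBuilder CreateHostBuilder(string[] args) =>
    Host.CreateDefaultBuilder(args)
        .ConfigureAppConfiguration(config =>
        {
            // Build the sources registered so far (appsettings.json, user secrets, ...)
            // so the App Configuration connection string can be read from them.
            var configuration = config.Build();

            // Add Azure App Configuration as an additional configuration source.
            config.AddAzureAppConfiguration(azureAppConfig =>
            {
                var azureAppConfigConnectionString = configuration["AzureAppConfig:ConnectionString"];

                // Cache refreshable values for 5 seconds before checking for updates.
                azureAppConfig.ConfigureRefresh(refresh =>
                {
                    refresh.SetCacheExpiration(TimeSpan.FromSeconds(5));
                });

                // Load feature flags along with the regular key-values.
                azureAppConfig.UseFeatureFlags();

                azureAppConfig.Connect(azureAppConfigConnectionString);
            });
        })
        .ConfigureWebHostDefaults(webBuilder =>
        {
            webBuilder.UseStartup<Startup>();
        });
```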

Inside ConfigureAppConfiguration, we add a new configuration source by calling AddAzureAppConfiguration. Before that, config.Build() builds the sources registered so far, so we can read the connection string from them. This means you can keep your connection string in appsettings.json or in user secrets; here I added it to appsettings.json under the key AzureAppConfig:ConnectionString. ConfigureRefresh sets how long values are cached: with 5 seconds, values read from Azure App Configuration are cached, and no request is sent to check for updates until those 5 seconds have passed. UseFeatureFlags tells the Azure App Configuration SDK to include feature flags when it reads the configuration, and finally Connect uses the connection string to connect to the Azure App Configuration instance.
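One detail worth checking in your package version: as far as I know, feature flags loaded by UseFeatureFlags() are cached on their own interval (30 seconds by default), not the 5 seconds set in ConfigureRefresh. If you want flag changes to show up faster, you can set that interval on the feature flag options. The sketch below wraps this in a hypothetical extension method, ConnectWithFastFeatureFlags; it assumes FeatureFlagOptions.CacheExpirationInterval is available in the package version you use (newer versions may deprecate it in favor of a refresh-interval method).

```csharp
using System;
using Microsoft.Extensions.Configuration.AzureAppConfiguration;

public static class AzureAppConfigExtensions
{
    // Hypothetical helper: connects to Azure App Configuration and loads
    // feature flags with a 5-second cache interval instead of the default.
    public static AzureAppConfigurationOptions ConnectWithFastFeatureFlags(
        this AzureAppConfigurationOptions options, string connectionString)
    {
        return options
            .Connect(connectionString)
            .UseFeatureFlags(featureFlags =>
            {
                // Assumption: this property exists in the package version in use.
                featureFlags.CacheExpirationInterval = TimeSpan.FromSeconds(5);
            });
    }
}
```

Inside AddAzureAppConfiguration, you would then call azureAppConfig.ConnectWithFastFeatureFlags(azureAppConfigConnectionString) in place of the separate UseFeatureFlags() and Connect(...) calls.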

Creating Azure App Config

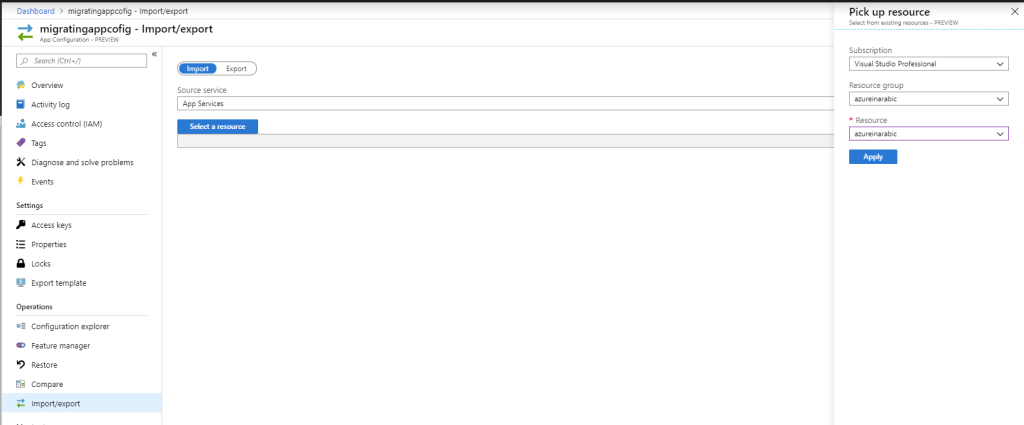

Log in to the Azure portal and create a new App Configuration resource. You can create only one free App Configuration store per subscription. Once it is created, go to Access Keys in the left menu, copy either the primary or the secondary connection string, and add it to your appsettings.json file under AzureAppConfig:ConnectionString.

Now you should be all set. If you run the application and navigate to https://localhost:44375/PreviewFeatures, you should be able to see the page if your feature configuration allows it.
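Whether the page shows up is decided by the feature gating code from the previous post. As a reminder of the general mechanism, here is a sketch of a controller gated with the FeatureGate attribute from the Microsoft.FeatureManagement.AspNetCore package; the controller body is an assumption and may not match the code in the repo.

```csharp
using Microsoft.AspNetCore.Mvc;
using Microsoft.FeatureManagement.Mvc;

// Hypothetical controller: the previous post's implementation may differ.
// When the "PreviewFeatures" flag evaluates to false, FeatureGate blocks the
// action; with the default disabled-feature handling, the response is 404.
[FeatureGate("PreviewFeatures")]
public class PreviewFeaturesController : Controller
{
    public IActionResult Index() => View();
}
```

Because the flag is evaluated on every request, the same controller starts or stops responding as soon as the configuration value behind PreviewFeatures changes.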

So far, we haven't added any feature flags to our Azure App Configuration instance, so the application falls back to the values in appsettings.json.

Add Features in Azure App Configuration

To start managing your features from Azure App Configuration, click Feature Manager in the left menu, choose Add, enter PreviewFeatures as the feature name, set it to Off, and click Add.

Now refresh https://localhost:44375/PreviewFeatures. If PreviewFeatures was enabled in appsettings.json, the page still appears. So what are we doing wrong?

Enable Auto Refresh

By default, Azure App Configuration does not refresh values automatically in an ASP.NET Core application. You have to register a middleware that does this by adding the following line to the Configure method in Startup.cs:

```csharp
app.UseAzureAppConfiguration();
```
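For context, a minimal Startup class with the middleware in place might look like the sketch below. It assumes an MVC app that uses the feature management services from the previous post. The services.AddAzureAppConfiguration() call is, as far as I know, required by newer versions of the package (4.x onward), where UseAzureAppConfiguration throws an InvalidOperationException without it; older versions do not need it.

```csharp
using Microsoft.AspNetCore.Builder;
using Microsoft.Extensions.DependencyInjection;
using Microsoft.FeatureManagement;

public class Startup
{
    public void ConfigureServices(IServiceCollection services)
    {
        services.AddControllersWithViews();

        // Registers the services used by the refresh middleware.
        services.AddAzureAppConfiguration();

        // Feature management as set up in the previous post (filters omitted).
        services.AddFeatureManagement();
    }

    public void Configure(IApplicationBuilder app)
    {
        // Placed early so incoming requests trigger a refresh check
        // once the cache interval has expired.
        app.UseAzureAppConfiguration();

        app.UseRouting();
        app.UseEndpoints(endpoints => endpoints.MapDefaultControllerRoute());
    }
}
```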

Now, if you run the application again, it reads the most recent values from Azure App Configuration when it starts, so the PreviewFeatures controller should stop working. If you then enable the feature again from the Azure portal by toggling its On/Off switch on the Feature Manager page, wait for the cached value to expire, and refresh the page, you should see that PreviewFeatures works again.

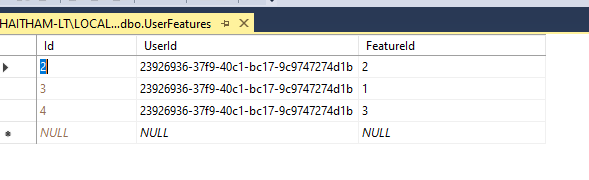

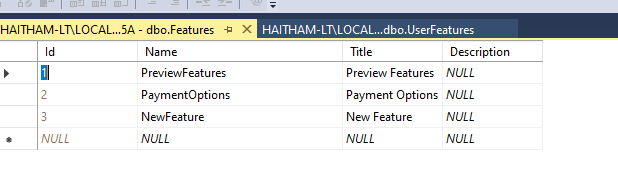

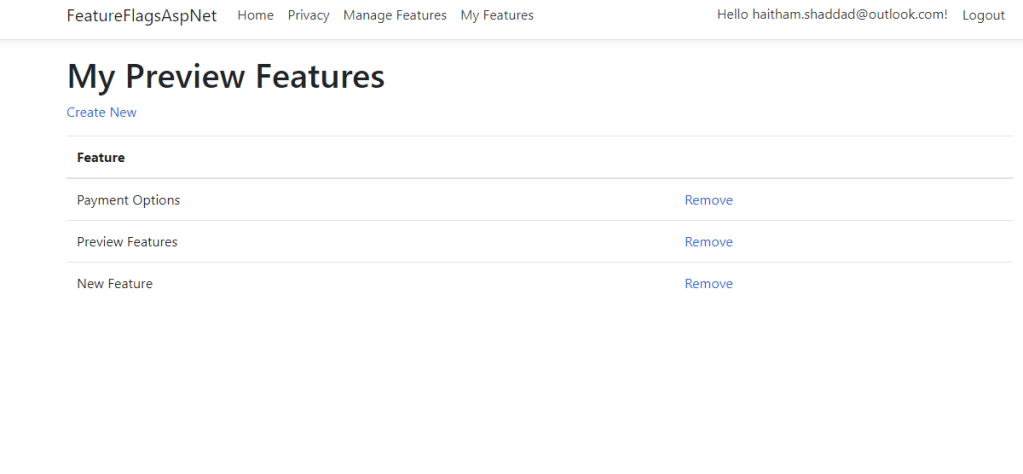

Note that the toggle turns the feature on or off for everyone. If you need a feature filter, click the three dots and choose Edit. Under Filters, click Add and type the feature filter name, in this case UserFeatures, which we created in the previous post. Notice how the state changes from On/Off to Conditional. Now when you refresh, the filter runs to decide whether the feature is enabled or disabled for each user.
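The name you type under Filters has to match a feature filter registered in the application. The UserFeatures filter itself comes from the previous post; the sketch below is only a hypothetical stand-in showing the shape of such a filter with Microsoft.FeatureManagement's IFeatureFilter. The "AllowedUsers" parameter name and the user-name check are assumptions for illustration.

```csharp
using System;
using System.Linq;
using System.Threading.Tasks;
using Microsoft.AspNetCore.Http;
using Microsoft.Extensions.Configuration;
using Microsoft.FeatureManagement;

// Hypothetical sketch: the real UserFeatures filter may work differently.
// The alias must match the filter name entered in the Azure portal.
[FilterAlias("UserFeatures")]
public class UserFeaturesFilter : IFeatureFilter
{
    private readonly IHttpContextAccessor _httpContextAccessor;

    public UserFeaturesFilter(IHttpContextAccessor httpContextAccessor)
    {
        _httpContextAccessor = httpContextAccessor;
    }

    public Task<bool> EvaluateAsync(FeatureFilterEvaluationContext context)
    {
        // Assumed parameter shape: an "AllowedUsers" array set on the filter.
        var allowedUsers = context.Parameters.GetSection("AllowedUsers").Get<string[]>()
                           ?? Array.Empty<string>();

        // Enable the feature only for signed-in users on the allow list.
        var userName = _httpContextAccessor.HttpContext?.User?.Identity?.Name;
        var enabled = userName != null &&
                      allowedUsers.Contains(userName, StringComparer.OrdinalIgnoreCase);

        return Task.FromResult(enabled);
    }
}
```

A filter like this would be registered with services.AddHttpContextAccessor() and services.AddFeatureManagement().AddFeatureFilter<UserFeaturesFilter>() in ConfigureServices.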

I have pushed all the updates to the GitHub repo. Feel free to ask me any questions.

You can also modify the URL and SSL settings.
